WS12026MSc2

Latest revision as of 12:50, 23 March 2026

Workshop MSc 2 (2026): Interactive Furniture for Arctic Environments

Excerpt from the brief (https://docs.google.com/document/d/1lwgkOkwLq_MkuHcLVb7TmrxsUspAnb1R3pm0jOyIj8c/edit):

The Interactive Architecture Prototypes (IAP) workshop focuses on skill building in Artificial Intelligence (AI)-supported Design-to-Robotic-Production and -Operation (D2RP&O) methods for the development of architectural hybrid assemblies, ranging from the micro level (material systems) to the meso and macro levels (building components and buildings). In this context, hybrid assemblies will be explored by designing and robotically producing and/or operating a structure that consists of various components assembled into an integrated larger whole.

FRAMEWORK

The focus is on an extreme environment, Antarctica: a cold, dry, and windy polar desert characterized by severe temperatures (ranging from -80 to -20 °C), strong katabatic winds, minimal precipitation, very low humidity, extreme light cycles with months of continuous daylight or darkness, high UV exposure, and reduced oxygen levels. In this context, the Norwegian research station in Antarctica, Troll, which consists of containers, struggles with limited space that confines activities and constrains the varied and changing needs of inhabitants with respect to work, rest, and leisure. Furthermore, health is highly sensitive to lighting conditions because of their impact on circadian rhythms, sleep, hormone cycles, visual performance, mood, and spatial orientation. Inadequate or poorly tuned lighting may impair alertness, mental health, immunity, and safety, making advanced, tunable, spectrum-controlled lighting systems essential.

APPROACH

To address these challenges, the design focuses on developing reconfigurable, interactive furniture with integrated AI-supported embedded lighting for the Troll station, which is essentially a collection of interconnected containers. The task focuses on 1-3 containers and their potential for spatial and environmental reconfiguration using a Voronoi-based approach and an AI-assisted illumination method. Both will be supported by hands-on tutorials and knowledge transfer from extraterrestrial to terrestrial environments and vice versa.

The tutorials focus on applications of D2RP&O methods:

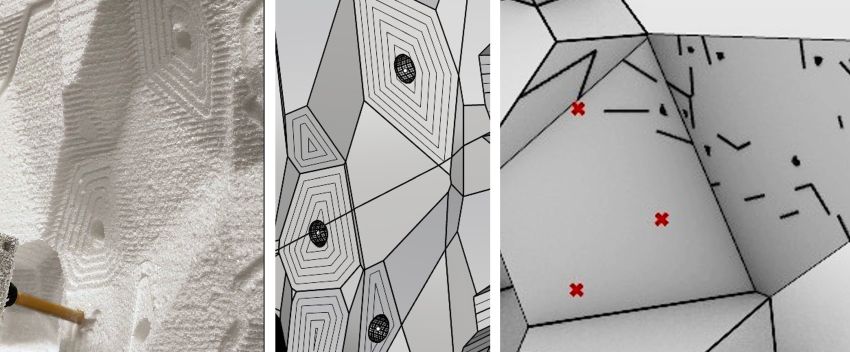

1. Voronoi-based D2RP

The container is fitted with reconfigurable Voronoi-based components that serve various uses changing around the clock, ranging from work to relaxation and leisure. Considering that 2-6 users inhabit 1-3 containers of 6 × 2.5 × 2.5 m each, the challenge is to design Voronoi-based reconfigurable furniture with integrated LED lighting. Reconfiguration relies mainly on folding, with the facets of the Voronoi cells creating variable seating, sleeping, and working areas. The facets have integrated wiring and LEDs and are robotically 3D printed from recycled plastic using D2RP methods.
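As a rough illustration of the Voronoi idea, the sketch below partitions the 6 × 2.5 m container floor into Voronoi cells and estimates the area of each cell. It is only a minimal sketch under stated assumptions: the seed points are random, the function names (`nearest_seed`, `voronoi_cell_areas`, `random_seeds`) and the 5 cm grid step are hypothetical, and the cells are approximated by grid sampling with the Python standard library rather than by the geometry tools used in the tutorial.

```python
import math
import random

# Container footprint from the brief: 6 x 2.5 m (the 2.5 m height is ignored here).
CONTAINER_LENGTH_M = 6.0
CONTAINER_WIDTH_M = 2.5


def nearest_seed(point, seeds):
    """Index of the seed closest to `point` (Euclidean distance)."""
    return min(range(len(seeds)), key=lambda i: math.dist(point, seeds[i]))


def voronoi_cell_areas(seeds, length=CONTAINER_LENGTH_M,
                       width=CONTAINER_WIDTH_M, step=0.05):
    """Approximate the floor area (m^2) of each Voronoi cell.

    The floor is sampled on a regular grid; every grid square is assigned
    to its nearest seed, which is the defining property of a Voronoi cell.
    """
    nx, ny = round(length / step), round(width / step)
    areas = [0.0] * len(seeds)
    for ix in range(nx):
        for iy in range(ny):
            centre = ((ix + 0.5) * step, (iy + 0.5) * step)
            areas[nearest_seed(centre, seeds)] += step * step
    return areas


def random_seeds(count, seed=0):
    """Hypothetical seed layout: `count` uniformly random points on the floor."""
    rng = random.Random(seed)
    return [(rng.uniform(0.0, CONTAINER_LENGTH_M),
             rng.uniform(0.0, CONTAINER_WIDTH_M)) for _ in range(count)]


if __name__ == "__main__":
    seeds = random_seeds(8)
    for (x, y), area in zip(seeds, voronoi_cell_areas(seeds)):
        print(f"seed ({x:.2f}, {y:.2f}) m -> cell area {area:.2f} m^2")
```

Moving a seed changes the size of its cell and of its neighbours, which is one simple way to explore how much floor area each furniture zone would receive before modelling folds and facets in detail.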

2. AI-supported D2RO

To simulate less extreme lighting conditions indoors, weather map data from Delft, Netherlands, is integrated with a synthetic dataset that links ambient illuminance and correlated colour temperature (CCT) to several physiological responses. The key assumptions are that higher illuminance produces increased heart rate (HR), decreased heart rate variability (HRV), smaller pupil size, lower blink rate, and higher skin conductance and respiration; that cooler light induces a minor HR increase and HRV decrease; and that diurnal baseline rhythms create slight circadian HR variation.

Prior to the tutorial, students are asked to install Python, Jupyter Notebook, and some common libraries, including scikit-learn: https://digipedia.tudelft.nl/tutorial/jupyter-notebook-installation-and-overview/?tab=chapter-0

The tutorial includes only limited discussion of theory; it is therefore recommended to review content from the Computational Intelligence for Integrated Design site:
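The assumptions above can be made concrete with a small synthetic example. The sketch below generates heart-rate samples that rise with illuminance and, slightly, with CCT, adds a small circadian term and noise, and then recovers the two trends with an ordinary least-squares fit. All numeric values (`HR_BASE`, `HR_PER_KLUX`, `HR_PER_KK`, `HR_CIRCADIAN`, `HR_NOISE`, the lux and CCT ranges) and all function names are hypothetical placeholders, not values from the workshop dataset, and only heart rate is modelled. The fit uses the standard library only; it is a swapped-in stand-in for the supervised learning tools used in the tutorial.

```python
import math
import random

# Hypothetical parameters, chosen only to mirror the stated assumptions.
HR_BASE = 60.0       # resting heart rate, bpm
HR_PER_KLUX = 8.0    # bpm increase per 1000 lux (higher illuminance -> higher HR)
HR_PER_KK = 1.0      # bpm increase per 1000 K (cooler light -> minor HR increase)
HR_CIRCADIAN = 2.0   # amplitude of the daily baseline variation, bpm
HR_NOISE = 2.0       # standard deviation of measurement noise, bpm


def make_dataset(n, seed=0):
    """Return n synthetic samples as (illuminance_lux, cct_kelvin, hour, hr_bpm)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        lux = rng.uniform(50.0, 1000.0)
        cct = rng.uniform(2700.0, 6500.0)
        hour = rng.uniform(0.0, 24.0)
        hr = (HR_BASE + HR_PER_KLUX * lux / 1000.0 + HR_PER_KK * cct / 1000.0
              + HR_CIRCADIAN * math.sin(2 * math.pi * (hour - 9.0) / 24.0)
              + rng.gauss(0.0, HR_NOISE))
        data.append((lux, cct, hour, hr))
    return data


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_least_squares(rows, targets):
    """Ordinary least squares via the normal equations (X^T X) w = X^T y."""
    n = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    xty = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(n)]
    return solve_linear_system(xtx, xty)


def fit_heart_rate_model(data):
    """Fit hr ~ w0 + w1 * (lux / 1000) + w2 * (cct / 1000); returns [w0, w1, w2]."""
    rows = [[1.0, lux / 1000.0, cct / 1000.0] for lux, cct, _, _ in data]
    return fit_least_squares(rows, [hr for *_, hr in data])


if __name__ == "__main__":
    w0, w1, w2 = fit_heart_rate_model(make_dataset(2000))
    print(f"intercept {w0:.1f} bpm, {w1:.2f} bpm per klux, {w2:.2f} bpm per 1000 K")
```

The circadian term is deliberately left out of the fitted model, so it behaves like extra noise. With scikit-learn installed, the same fit could be done with `sklearn.linear_model.LinearRegression` on the two scaled features.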

Probability and Statistics: https://digipedia.tudelft.nl/course/computational-intelligence-for-integrated-design/?tab=chapter-1

Supervised Learning, and Neural Networks: https://digipedia.tudelft.nl/course/computational-intelligence-for-integrated-design/?tab=chapter-4

Additional reading: Machine Learning: An introduction by Kevin P. Murphy

DELIVERABLES

The application of the AI-supported D2RP&O process is demonstrated with respect to:

1. Additive D2RP: The development of a reconfigurable, structurally and functionally optimized 3D printable structure;

2. AI-supported D2RO: The development and integration of interactive/responsive lighting to accommodate individual needs.

Students work in groups of about 5 members, with members taking specific roles focusing on D2RP, D2RO, and/or their integration. The computational design is informed by programmatic, structural, operational, and materialization requirements.

Additional information is available in the brief (https://docs.google.com/document/d/1lwgkOkwLq_MkuHcLVb7TmrxsUspAnb1R3pm0jOyIj8c/edit).

COORDINATORS, TUTORS & ASSISTANTS

Henriette Bier, Arwin Hidding, Lisa-Marie Mueller & Vera Laszlo

STUDENTS

Group 1

Group 2

Group 3

Group 4

DOCUMENTS

Schedule: https://docs.google.com/spreadsheets/d/1wdQEvPvv7gUu-ZV9XkEZfpRcdz9t13LKzSTBah937-0/edit

Brief: https://docs.google.com/document/d/1lwgkOkwLq_MkuHcLVb7TmrxsUspAnb1R3pm0jOyIj8c/edit

Student list: https://docs.google.com/spreadsheets/d/1AOVUd0Er4L60kd4_d7-1HiplAooonuL7gRpy7-E8srU/edit

REFERENCES

Video: https://www.youtube.com/watch?v=qYNweeDHiyU

Paper: https://doi.org/10.1080/24751448.2024.2322927

TEMPLATES

Report:

https://docs.google.com/document/d/1fNNps7UfgIfoOH8G0ar-5Bzz26-up8Y4/edit

Video & instructions:

https://drive.google.com/file/d/1eb58-dR2yR7Ulc5dQq765uEKmdqVlVhV/view

- In the zip file, open the file named 'AI_Msc2_Video Template. prproj'; the other files are just reference images and videos used for the template.

- Replace the movie clips in place so that the current effects on the video are kept.

- You will see the following titles in the template: '3D Printing', 'Design', 'Node', and 'Robotic Arm'. These are just the sections of the template. You can also reach those sections by double-clicking from the general video section, which is 'Movie 1'.

- If the font used in the video is not installed on your computer, install it via Settings > Control Panel > Fonts. Windows users, at least, can copy and paste the font into the 'Fonts' folder.

Archive:

https://drive.google.com/file/d/1MsjV7GPDhpol1BUl02_tLhqz6iUiKADP/view